Can You Do Video To Video To Lipsync On Hedra?

Okay, so I was messing around the other day, you know, one of those deep dives into the internet rabbit hole that starts with "what's new in AI art?" and ends with me questioning the very fabric of reality and whether my cat secretly understands quantum physics. Anyway, I stumbled across this thing called "Hedra," and my brain, which is perpetually fueled by caffeine and a healthy dose of skepticism, immediately perked up. It sounded… futuristic. Like something straight out of a sci-fi flick where people communicate solely through perfectly synced, AI-generated lip movements.

Naturally, my first thought, after the usual existential dread subsided, was: Can you actually do video-to-video-to-lipsync on Hedra? It's a mouthful, I know. Imagine it: you feed it a video of someone talking, and then you give it another video, maybe of your favorite celebrity or even yourself doing a silly dance, and you want Hedra to take the audio from the first video and make the lips of the person in the second video move to that audio. And not just vaguely flap around, but, like, actually match the words. Sounds like a dream, right? Or maybe a nightmare for anyone trying to prove their identity online. Heh.

So, let's break this down, shall we? Because my curiosity, much like my screen time, is often a little… excessive. We're talking about taking existing video content and morphing it, using AI, to mimic speech from another source. This isn't just about slapping a funny mustache on someone anymore. We're talking about deepfakes, but with a specific focus on mouth movements. And the big question on everyone's mind (or at least, my mind, and hopefully yours now too) is whether Hedra is the magic bullet for this particular brand of digital wizardry.

The Rise of the AI Mimics

It feels like just yesterday we were marveling at AI that could generate a static image from a text prompt. Now? We've got AI that can write poetry, compose music, and, yes, create entirely new videos. The pace of innovation is, frankly, a little dizzying. And within this explosion of creative AI, the ability to manipulate video, especially to achieve realistic lipsync, has become a huge area of focus. Think about it: the potential applications are… well, they’re everywhere.

Imagine historical footage where figures could speak modern languages, perfectly synced. Or educational videos where complex topics are explained by animated characters with impeccable diction. Or, you know, you finally being able to make that meme of your dog singing the entirety of Bohemian Rhapsody a reality. The possibilities, both wonderful and… less wonderful, are endless. And as these tools become more accessible, the question of “can it be done?” becomes more pressing.

Now, when we talk about "video-to-video-to-lipsync," we're essentially describing a multi-stage process. First, you have your source video with the audio you want to use. This is the "what to say" part. Then, you have your target video – the person or character whose lips you want to move. This is the "who to say it with" part. The AI then needs to analyze the audio, understand the phonemes (the basic sounds of speech), and then translate that into precise lip movements on the target video. And this is where things get… interesting.
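To make the "what to say" stage a bit more concrete, here's a minimal sketch of how you might pull the audio track out of the source video before handing it to any lipsync tool. It only builds an ffmpeg command line; the helper name `extract_audio_command` and the mono 16 kHz WAV settings are my own assumptions (a common choice for speech models), not anything Hedra documents or requires.

```python
import subprocess


def extract_audio_command(source_video: str, wav_out: str) -> list[str]:
    """Build an ffmpeg command that saves the video's audio as mono 16 kHz WAV.

    Hypothetical helper for illustration; the sample rate and channel
    count are assumptions, not requirements of any particular tool.
    """
    return [
        "ffmpeg",
        "-y",                 # overwrite the output file if it already exists
        "-i", source_video,   # input: the "what to say" video
        "-vn",                # drop the video stream, keep audio only
        "-ac", "1",           # downmix to a single (mono) channel
        "-ar", "16000",       # resample to 16 kHz
        "-c:a", "pcm_s16le",  # uncompressed 16-bit PCM
        wav_out,
    ]


def extract_audio(source_video: str, wav_out: str) -> None:
    """Run the command; this part needs ffmpeg installed and on your PATH."""
    subprocess.run(extract_audio_command(source_video, wav_out), check=True)
```

Calling `extract_audio("source.mp4", "speech.wav")` would leave you with a clean audio file, which is the only thing the later stages need from the first video.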

Hedra: What's the Deal?

So, what is Hedra? From what I gathered in my late-night scrolling, Hedra is positioned as a platform that leverages advanced AI models for creative media generation. The buzz around it often centers on its ability to handle complex visual transformations and to facilitate the creation of unique digital content. The key here is "creative media generation." Does that encompass the intricate art of making one video’s audio drive another video’s facial expressions? That’s the million-dollar question, isn't it?

When you look at the broader landscape of AI video tools, there are different approaches. Some focus on generating entirely new videos from scratch. Others specialize in specific tasks, like adding effects, changing backgrounds, or, crucially, manipulating facial features for lipsync. The question for Hedra is whether it’s a generalist powerhouse that can do all of this, or if it has specific modules or capabilities that are designed for this very specialized form of video manipulation.

My initial impression, and I’m still trying to get a definitive answer, is that Hedra is more focused on generating novel video content based on certain prompts or styles, rather than being a direct video-to-video replacement tool in the way some other specialized AI might be. Think of it this way: if you want to generate a whole new cartoon character singing a song, Hedra might be amazing at that. But if you have a video of, say, a cat, and you want to make its mouth move to a spoken sentence from a different video, that’s a more specific, and frankly, a trickier problem.

The Technical Tango of Lipsync

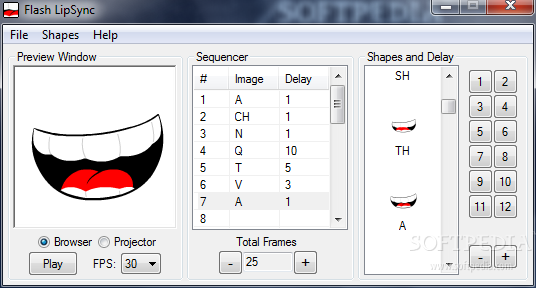

Let’s get a little nerdy for a second, because understanding why this is hard is key to appreciating the tech. Lipsync, even in traditional animation, is notoriously difficult to get perfect. The human mouth is incredibly expressive, and even slight inaccuracies can make something look uncanny, creepy, or just plain wrong. Think about those early CGI movies where the character's mouth looked like a badly glued-on sticker. Shudder.

Now, with AI, we’re asking a machine to do this in real-time or near-real-time, and to do it convincingly. This involves a few critical steps:

- Audio Analysis: The AI needs to break down the audio into its constituent sounds (phonemes). This requires sophisticated speech recognition and acoustic modeling.

- Facial Landmark Detection: On the target video, the AI has to identify key points on the face – the corners of the mouth, the lips themselves, the jawline, etc.

- Mapping and Generation: This is the really complex part. The AI then has to map the identified phonemes to specific facial poses and movements. It’s not just about opening and closing the mouth; it’s about the subtle curves, the tension, the way the lips meet or part for different sounds. This often involves training on massive datasets of people speaking.

- Rendering and Blending: Finally, the AI needs to generate the new facial movements and seamlessly blend them into the existing video, ensuring smooth transitions and avoiding jarring visual artifacts. This is where the "video-to-video" aspect really comes into play. You're not just generating new frames; you're altering existing ones.
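If you want a feel for the "mapping" step in the list above, here's a toy sketch of it. It assumes the audio analysis has already produced phonemes with start and end times, and it turns them into one mouth shape (a "viseme") per video frame. The phoneme table, the viseme names, and the `visemes_per_frame` function are all made up for illustration; real systems learn this mapping from data and produce far subtler output, and none of this reflects how Hedra works internally.

```python
from dataclasses import dataclass

# Hypothetical, heavily simplified phoneme-to-viseme table (illustration only).
PHONEME_TO_VISEME = {
    "P": "closed", "B": "closed", "M": "closed",
    "F": "lip_to_teeth", "V": "lip_to_teeth",
    "AA": "open", "AE": "open",
    "OW": "rounded", "UW": "rounded",
    "IY": "spread", "EH": "spread",
}


@dataclass
class TimedPhoneme:
    phoneme: str
    start_ms: int  # when the sound starts, in milliseconds
    end_ms: int    # when the sound ends, in milliseconds


def visemes_per_frame(phonemes, fps, duration_ms):
    """Return one viseme label per video frame; unvoiced frames are 'rest'."""
    n_frames = duration_ms * fps // 1000
    frames = ["rest"] * n_frames
    for p in phonemes:
        # Unknown phonemes fall back to the neutral mouth shape.
        viseme = PHONEME_TO_VISEME.get(p.phoneme, "rest")
        first = p.start_ms * fps // 1000
        last = min(n_frames, p.end_ms * fps // 1000)
        for i in range(first, last):
            frames[i] = viseme
    return frames


# The word "ma": lips closed for M, then open for AA, then silence.
word = [TimedPhoneme("M", 0, 200), TimedPhoneme("AA", 200, 500)]
print(visemes_per_frame(word, fps=10, duration_ms=600))
# → ['closed', 'closed', 'open', 'open', 'open', 'rest']
```

Even this toy version hints at why the real thing is hard: a per-frame label says nothing about how the mouth moves between shapes, and that in-between motion is exactly what the rendering and blending step has to get right.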

So, when we ask if Hedra can do "video-to-video-to-lipsync," we’re asking if it has integrated all of these complex stages into a single, user-friendly workflow. And the truth is, many AI platforms excel at parts of this. Some are fantastic at generating realistic human faces from scratch, others are great at analyzing audio. But the seamless integration of taking an existing video and making its subject speak audio from a different source? That’s a more specialized beast.

Hedra's Sweet Spot (Probably)

My current understanding is that Hedra's strength lies in its ability to generate new visual content, often based on artistic styles or specific creative directives. Think of it as a super-powered creative studio. You might feed it a video as a style reference, or perhaps to extract certain visual elements, but it's less likely to be a direct "take this audio, apply it to this existing video's face" kind of tool out-of-the-box.

Platforms that do excel at this specific video-to-video lipsync task tend to be more narrowly focused. They might be explicitly designed for voice cloning and avatar lip-syncing. These tools often require you to upload a neutral talking-head video of your target person and then provide the audio, and they'll generate a new video of that person speaking your audio. It's a highly specialized niche, and for good reason – it’s incredibly challenging to do well.

So, while Hedra is undoubtedly a powerful AI tool for content creation, the specific scenario of "video A's audio to video B's lips" is likely not its primary, or even a well-supported, feature. It's possible that with enough clever prompting, or by using Hedra in conjunction with other tools, you might be able to achieve something resembling this. But a direct, one-click solution for video-to-video-to-lipsync? Probably not. And that’s okay! Every AI tool has its strengths, and trying to make them do everything is like asking a chef to also perform open-heart surgery. Not the best idea.

The Deeper Dive: What Can Hedra Do?

Now, don’t get me wrong. Just because Hedra might not be the direct answer to my video-to-video-to-lipsync query doesn’t mean it’s not a fascinating piece of technology. Far from it! If you're into generating unique visual art, creating stylized animations, or perhaps even experimenting with AI-driven storytelling, Hedra sounds like it could be a goldmine.

Imagine feeding Hedra a bunch of your old home videos and asking it to re-render them in the style of Van Gogh, or as a retro 8-bit game. Or perhaps you have a specific character design in mind, and you want Hedra to generate a short animation of that character performing an action. These are the kinds of creative playgrounds where Hedra likely shines. It’s about pushing the boundaries of what’s visually possible with AI.

The beauty of these advanced AI platforms is that they often provide a foundation for innovation. What might not be a direct feature today could become a possibility with future updates, or with the clever work of developers building on top of Hedra’s capabilities. The AI landscape is constantly evolving, and what seems like a limitation now could be a springboard for something entirely new down the line.

So, while my quest for the ultimate AI-driven, video-swapping, lipsyncing machine might not have found its perfect match in Hedra, it’s certainly led me down an interesting path. It’s a reminder that AI is not a monolithic entity. It’s a collection of incredibly specialized tools, each with its own strengths and purposes. And understanding those distinctions is key to not only using them effectively but also to appreciating the incredible progress being made.

The Future of Digital Mimicry

The question of whether you can do video-to-video-to-lipsync on Hedra, while specific, touches upon a much larger conversation about the future of digital media. We're entering an era where the line between reality and digital creation is becoming increasingly blurred. AI is democratizing creative power, allowing individuals to produce content that was once only possible with large teams and expensive equipment.

The ability to manipulate video so precisely, to make individuals say things they never said, or to appear in contexts they never were, raises significant ethical considerations. But it also opens up incredible avenues for artistic expression, education, and communication. It’s a double-edged sword, as so many powerful technologies are.

For now, if you're looking for a dedicated solution for taking one video's audio and applying it to another video’s face with perfect lipsync, you might need to explore more specialized AI tools designed precisely for that purpose. Hedra, from what I can tell, is more about generating unique visual content and artistic transformations. But as AI continues its relentless march forward, who knows? Maybe in a few years, Hedra, or its successors, will be the go-to platform for turning your wildest video-swapping dreams into a perfectly synced reality. Until then, I’ll be here, pondering if my cat’s meows are actually complex algorithms I just haven’t cracked yet. You never know!